Language and Perception for Robotics


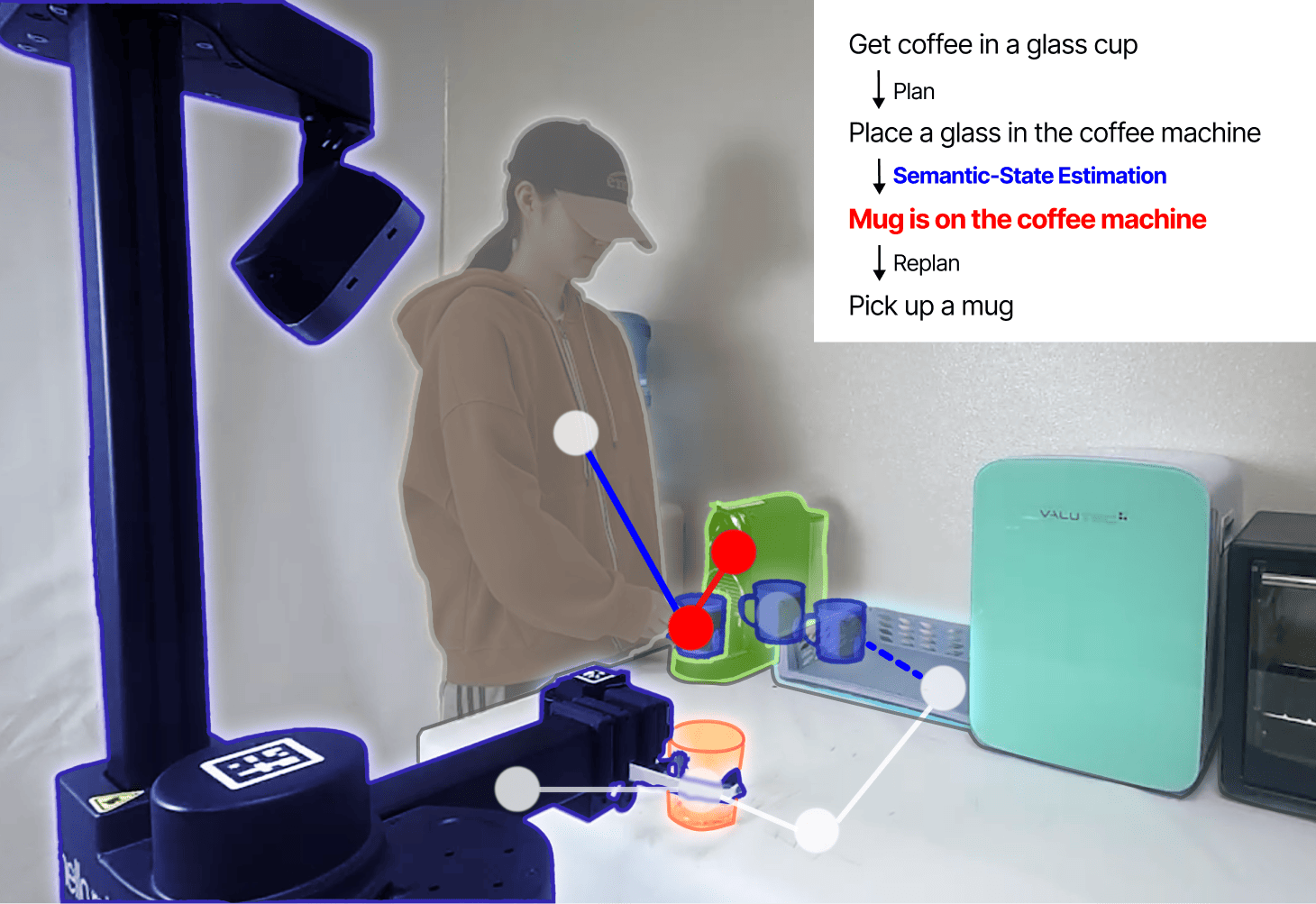

Open-Set Semantic-State Estimation with Large Multimodal Models (Work in progress for RA-L 2025)

Problem Formulation

- Problem: Identifying the semantic states of an environment without predefined task domains

- Challenge: Estimated states must be relevant to the task and consistent enough to support downstream applications

- Solution: A graph-based prompting method that feeds both the task's semantic-state space and the latest semantic state into the inference process of Large Multimodal Models (LMMs)

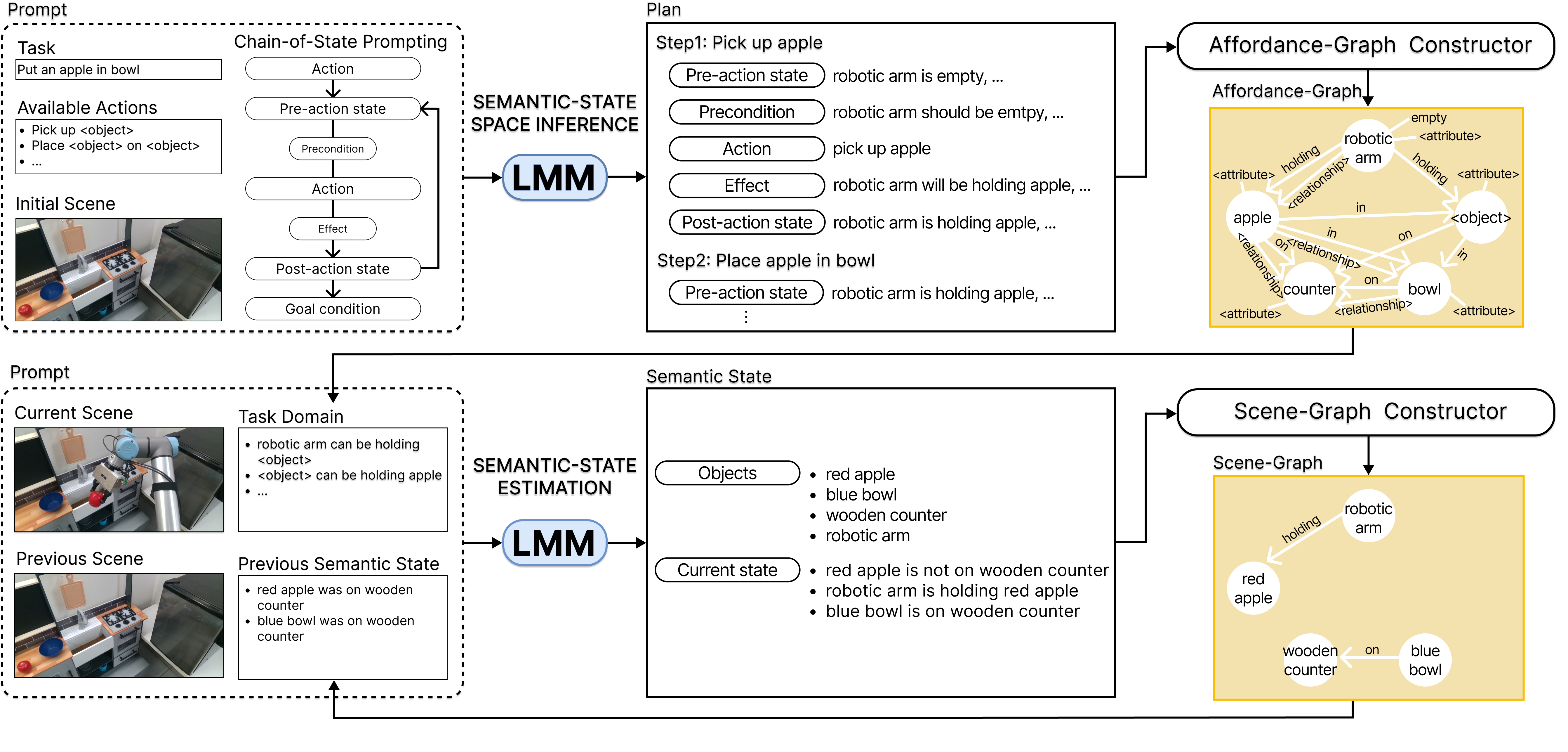

Approach

- Two-step approach: (1) deriving the task's semantic-state space through LMM-based task planning, and (2) using an LMM to estimate the scene's state within that space

- Chain-of-State prompting: A prompting method that guides LMMs to generate task plans by inferring sequential state transitions, inspired by classical planning

- Affordance Graph: A structured representation of the semantic-state space, derived by processing the task plan

- Scene Graph: A structured representation of the latest semantic state, constructed by processing the estimated states

- EBNF-formatted prompts: Prompts written in a formal metalanguage (Extended Backus-Naur Form) that enable zero-shot inference in both steps
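The interplay between the two graphs can be sketched in Python. This is a minimal illustration under stated assumptions, not the project's implementation: it assumes a Chain-of-State plan can be flattened into per-object transitions (a `before` state, an `action`, an `after` state), treats the affordance graph as each object's allowed one-step state transitions, and treats the scene graph as a plain mapping from each object to its latest state. The names `Transition`, `build_affordance_graph`, and `update_scene_graph` are hypothetical, and the `proposals` dictionaries stand in for an LMM's per-frame estimates.

```python
from __future__ import annotations

from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Transition:
    """One hypothetical Chain-of-State step for a single object."""
    obj: str     # object name, e.g. "cup"
    before: str  # semantic state before the action
    action: str  # action that causes the transition
    after: str   # semantic state after the action


def build_affordance_graph(plan: list[Transition]) -> dict[str, dict[str, set[str]]]:
    """Map each object to its states and the states reachable from each in one step."""
    graph: dict[str, dict[str, set[str]]] = defaultdict(dict)
    for t in plan:
        graph[t.obj].setdefault(t.before, set()).add(t.after)
        graph[t.obj].setdefault(t.after, set())  # register terminal states too
    return dict(graph)


def update_scene_graph(
    scene: dict[str, str],
    proposals: dict[str, str],
    affordance: dict[str, dict[str, set[str]]],
) -> dict[str, str]:
    """Return a new scene graph. A proposed state is accepted only if it lies in the
    object's state space and either keeps the latest state or is one allowed
    transition away from it; otherwise the previous state is kept."""
    updated = dict(scene)
    for obj, proposed in proposals.items():
        states = affordance.get(obj, {})
        if proposed not in states:
            continue  # outside the task's semantic-state space
        current = scene.get(obj)
        if current is None or proposed == current or proposed in states.get(current, set()):
            updated[obj] = proposed
    return updated


if __name__ == "__main__":
    plan = [
        Transition("cup", "on_table", "grasp", "in_gripper"),
        Transition("cup", "in_gripper", "place", "on_shelf"),
    ]
    affordance = build_affordance_graph(plan)
    scene = {"cup": "on_table"}
    # Stand-ins for an LMM's per-frame estimates (hypothetical).
    for proposal in ({"cup": "on_shelf"}, {"cup": "in_gripper"}, {"cup": "broken"}):
        scene = update_scene_graph(scene, proposal, affordance)
        print(proposal, "->", scene)
```

With the plan `cup: on_table → in_gripper → on_shelf`, a jump from `on_table` straight to `on_shelf` is rejected, `in_gripper` is accepted, and `broken` is discarded as outside the state space. Rejecting multi-step jumps is a deliberate simplification that favors consistency; a system that processes sparse frames might instead accept any state reachable in the graph.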

Results

- Statistical evaluation: On 30 real-world manipulation videos, improved semantic-state estimation accuracy by up to 122% and consistency by 70% compared to baselines and ablations

- Real-world demonstrations: Validated the robustness of the approach in human-interruption scenarios, showing its effectiveness for adaptive task execution
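The section does not state how the percentages are computed. Assuming they are relative improvements over the compared method's score $s_{\text{base}}$, they translate as follows (a 122-percentage-point gain would be impossible for an accuracy bounded by 100%, so at least the accuracy figure must be relative):

$$
\Delta_{\text{rel}} = \frac{s_{\text{ours}} - s_{\text{base}}}{s_{\text{base}}}, \qquad
\Delta_{\text{rel}} = 1.22 \;\Rightarrow\; s_{\text{ours}} = 2.22\, s_{\text{base}}, \qquad
\Delta_{\text{rel}} = 0.70 \;\Rightarrow\; s_{\text{ours}} = 1.70\, s_{\text{base}}
$$

Under this reading, the best-case accuracy is about 2.2 times the compared score, and consistency is 1.7 times the compared score.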

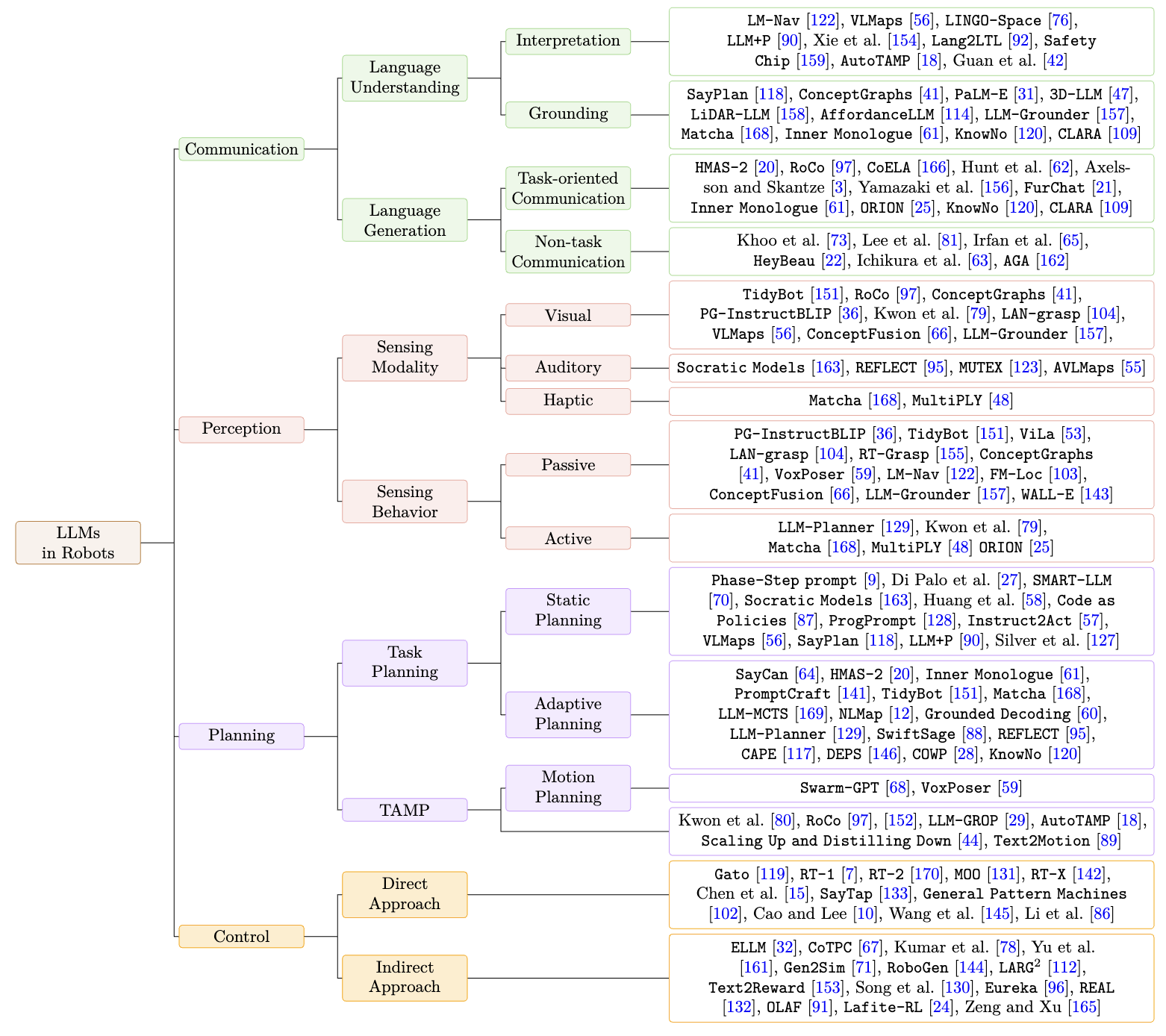

A Survey on Integration of Large Language Models with Intelligent Robots (ISR 2024)

Contribution

- Taxonomy Proposal: Categorized large language model (LLM) applications in robotics by core elements: communication, perception, planning, and control

- Communication Section: Analyzed how LLMs enhance robots’ communication capabilities in language understanding (interpretation and grounding) and language generation (task-oriented and non-task communication)

- Prompt Guidelines: Provided guidelines and an example conversation prompt for achieving interactive grounding
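A minimal Python sketch of interactive grounding, written for illustration and not taken from the survey: the robot checks a referent against a symbolic world model and, when the reference matches several objects or none, asks a clarifying question instead of acting. The system prompt text, `SceneObject`, `build_messages`, and `ground` are hypothetical. The messages use the common role/content chat format, and in a real system the LLM itself, rather than the rule-based `ground`, would decide when to ask.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class SceneObject:
    """Hypothetical entry in a symbolic (semantic) world model."""
    name: str
    category: str
    attributes: frozenset[str] = frozenset()


# Hypothetical system prompt: ground each reference or ask for clarification.
SYSTEM_PROMPT = (
    "You are a household robot. Map every object the user mentions to exactly one "
    "object in the world model below. If a reference matches several objects or "
    "none, do not act; ask one short clarifying question instead."
)


def build_messages(world: list[SceneObject], utterance: str) -> list[dict[str, str]]:
    """Assemble role/content chat messages that include the world model."""
    listing = "\n".join(
        f"- {o.name}: {o.category} ({', '.join(sorted(o.attributes))})" for o in world
    )
    return [
        {"role": "system", "content": f"{SYSTEM_PROMPT}\nWorld model:\n{listing}"},
        {"role": "user", "content": utterance},
    ]


def ground(world: list[SceneObject], category: str,
           attributes: frozenset[str] = frozenset()) -> tuple[str, str]:
    """Rule-based stand-in for the LLM's decision: ground a unique match, else ask."""
    matches = [o for o in world if o.category == category and attributes <= o.attributes]
    if len(matches) == 1:
        return ("grounded", matches[0].name)
    if not matches:
        return ("ask", f"I can't find a {category}. Could you describe it another way?")
    options = " or ".join(o.name for o in matches)
    return ("ask", f"Which {category} do you mean: {options}?")
```

For example, with a red `cup_1` and a blue `cup_2` in the world model, `ground(world, "cup")` returns `("ask", "Which cup do you mean: cup_1 or cup_2?")`, while `ground(world, "cup", frozenset({"red"}))` returns `("grounded", "cup_1")`.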

Key Insights

- The capability of LLMs to interpret and process formal languages

- The necessity of a semantic world model for grounding natural language in LLMs

- The importance of auxiliary knowledge to complement the commonsense knowledge in LLMs
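The first insight, like the EBNF-formatted prompts of the semantic-state project, rests on an LLM following a formal specification of its output. A minimal sketch under assumptions: the grammar below is a hypothetical answer format (one `object.state` statement per line) written in ISO/IEC 14977 EBNF, `build_prompt` embeds it in a zero-shot prompt, and `parse_answer` checks a response with a regular expression hand-translated from the grammar. None of these names or formats come from the projects above.

```python
from __future__ import annotations

import re

# Hypothetical answer format in ISO/IEC 14977 EBNF, embedded verbatim in the prompt.
STATE_GRAMMAR = """\
answer     = statement , { newline , statement } ;
statement  = object , "." , state ;
object     = identifier ;
state      = identifier ;
identifier = letter , { letter | digit | "_" } ;
letter     = ? any ASCII letter ? ;
digit      = ? any decimal digit ? ;
newline    = ? line break ? ;
"""

# Regular expression hand-translated from the `statement` rule above.
STATEMENT_RE = re.compile(r"[A-Za-z][A-Za-z0-9_]*\.[A-Za-z][A-Za-z0-9_]*")


def build_prompt(objects: list[str]) -> str:
    """Build a zero-shot prompt asking for each object's state in the grammar's format."""
    return (
        "Report the current state of each object.\n"
        "Your answer must conform to this EBNF grammar:\n"
        f"{STATE_GRAMMAR}"
        f"Objects: {', '.join(objects)}"
    )


def parse_answer(text: str) -> dict[str, str] | None:
    """Return {object: state} if every line is a valid `statement`, else None.

    Whitespace around each line is stripped first, which is slightly more
    lenient than the grammar itself.
    """
    lines = [line.strip() for line in text.strip().splitlines()]
    if not lines:
        return None  # `answer` requires at least one statement
    result: dict[str, str] = {}
    for line in lines:
        if not STATEMENT_RE.fullmatch(line):
            return None
        obj, state = line.split(".")
        result[obj] = state
    return result
```

Returning `None` for a non-conforming answer gives the caller a clear point to re-prompt, rather than guessing at the meaning of free-form text.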