Speech Interaction


Voice Command Interface for Language-guided Navigation

Problem Formulation

- Problem: Language-guided navigation for mobile manipulators and quadrupeds

- Challenge: Robust speech command recognition and seamless integration with navigation frameworks

- Solution: A real-time voice command framework that integrates the NVIDIA Riva ASR engine with ROS and an RViz plugin

Approach

- Deployed NVIDIA Riva Citrinet ASR on a Jetson AGX Orin, achieving sub-second speech recognition latency on an edge device

- Integrated the navigation goal grounding and path planning algorithm into the ROS framework

- Improved robustness through a human-in-the-loop validation process that enables visualization and manual correction of speech recognition results

- Designed a dynamic interface that clearly displays speech recognition and communication status, further enhancing system robustness

- Implemented configurable options for the locations and languages recognized in speech commands, allowing the system to adapt to different command environments
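The human-in-the-loop correction and the configurable locations and languages described above can be illustrated with a minimal, framework-free Python sketch. Everything in it is hypothetical: `CommandConfig`, `ground_goal`, `handle_command`, the location names, the coordinates, and the keyword-matching rule are illustrative assumptions rather than the project's code, and the Riva and ROS plumbing is omitted. In a real deployment the transcript would come from the ASR engine and the grounded pose would be handed to the ROS navigation stack (for example as a `geometry_msgs/PoseStamped`).

```python
from dataclasses import dataclass, replace
from typing import Callable, Dict, Optional, Tuple

Pose = Tuple[float, float, float]  # hypothetical (x, y, yaw) in the map frame


@dataclass(frozen=True)
class CommandConfig:
    """Hypothetical operator-configurable settings for speech commands."""
    locations: Dict[str, Pose]          # location name -> goal pose
    aliases: Dict[str, Dict[str, str]]  # language -> {spoken keyword: location name}
    language: str = "en"


def ground_goal(transcript: str, cfg: CommandConfig) -> Optional[Tuple[str, Pose]]:
    """Return the first configured location whose keyword appears in the transcript."""
    text = transcript.lower()
    for keyword, name in cfg.aliases.get(cfg.language, {}).items():
        if keyword in text:  # naive substring match, for illustration only
            return name, cfg.locations[name]
    return None


def handle_command(
    transcript: str,
    cfg: CommandConfig,
    review: Callable[[str], Optional[str]],
) -> Optional[Tuple[str, Pose]]:
    """Human-in-the-loop step: the operator sees the ASR text and may correct it.

    `review` returns None to accept the transcript as-is, or a corrected string.
    """
    corrected = review(transcript)
    return ground_goal(transcript if corrected is None else corrected, cfg)


# Example configuration (made-up names and coordinates).
cfg = CommandConfig(
    locations={"kitchen": (2.0, 1.5, 0.0), "lab": (-1.0, 3.0, 1.57)},
    aliases={
        "en": {"kitchen": "kitchen", "lab": "lab"},
        "ko": {"주방": "kitchen", "실험실": "lab"},
    },
)

# ASR misheard "lab" as "lap"; the operator's correction recovers the goal.
print(handle_command("go to the lap", cfg, lambda t: "go to the lab"))
# Switching the configured language to Korean.
print(ground_goal("주방으로 가 줘", replace(cfg, language="ko")))
```

Plain substring matching keeps the sketch short but would misfire on words that merely contain a location keyword; a real grounding step would need proper tokenization or a learned model.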

Results

- Led the setup and operation of a language-guided navigation demonstration for the KAIST Institute Meta-Research Project using Hello Robot Stretch 2

- Delivered five successful language-guided navigation demonstrations with Boston Dynamics Spot

Inter-linguistic Composition for Second-Language Pronunciation (under review at NAACL 2025)

Problem Formulation

- Problem: Low recognition rates of second-language (L2) speakers' pronunciations by automatic speech recognition (ASR) models

- Challenge: L2 speakers unconsciously replace unfamiliar L2 phonemes with similar L1 phonemes, even though native speakers of the target language perceive these phonemes as distinct

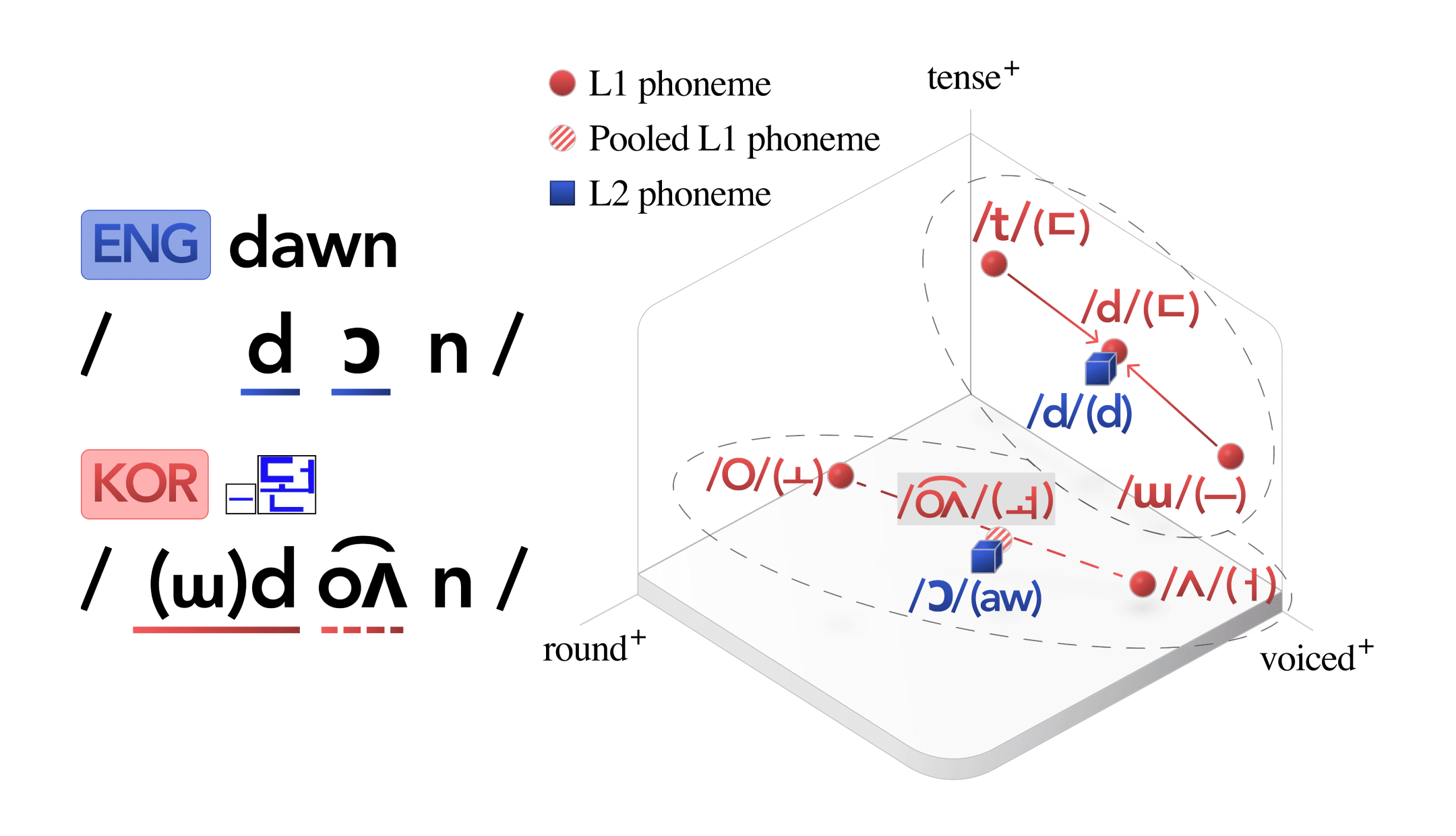

- Solution: Inter-linguistic Phonetic Composition (IPC), a novel computational method that minimizes incorrect phonological transfer by reconstructing L2 phonemes as composite sounds derived from multiple L1 phonemes
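To make the idea of a composite sound concrete, here is a toy Python sketch that approximates one L2 phoneme by the best single L1 phoneme or pair of L1 phonemes in a shared feature space. It is not the IPC algorithm itself, whose details are not given here: the six binary features, the tiny L1 inventory, feature-wise averaging as the composition rule, and squared Euclidean distance are all simplifying assumptions chosen for illustration.

```python
from itertools import combinations
from typing import Dict, Sequence, Tuple

# Toy binary feature order (illustrative only):
# (voiced, labial, coronal, dorsal, continuant, sonorant)
L1_INVENTORY: Dict[str, Tuple[int, ...]] = {
    "p": (0, 1, 0, 0, 0, 0),  # voiceless labial stop
    "h": (0, 0, 0, 0, 1, 0),  # voiceless glottal fricative
    "s": (0, 0, 1, 0, 1, 0),  # voiceless coronal fricative
    "m": (1, 1, 0, 0, 0, 1),  # labial nasal
    "k": (0, 0, 0, 1, 0, 0),  # voiceless dorsal stop
}


def composite(vectors: Sequence[Tuple[int, ...]]) -> Tuple[float, ...]:
    """Assumed composition rule: feature-wise mean of the component phonemes."""
    return tuple(sum(col) / len(vectors) for col in zip(*vectors))


def sq_distance(a: Sequence[float], b: Sequence[float]) -> float:
    return sum((x - y) ** 2 for x, y in zip(a, b))


def approximate(target, inventory, max_parts=2):
    """Search all combinations of 1..max_parts L1 phonemes for the closest composite."""
    best, best_d = None, float("inf")
    for n in range(1, max_parts + 1):
        for combo in combinations(inventory, n):
            d = sq_distance(composite([inventory[c] for c in combo]), target)
            if d < best_d:
                best, best_d = combo, d
    return best, best_d


english_f = (0, 1, 0, 0, 1, 0)  # voiceless labial fricative, absent from the toy L1
print(approximate(english_f, L1_INVENTORY))  # (('p', 'h'), 0.5)
```

With these toy values, neither the stop /p/ nor the fricative /h/ alone gets closer than a distance of 1.0 to a voiceless labial fricative like English /f/, while their averaged features reach 0.5, which is the intuition behind composing an L2 phoneme from several L1 phonemes.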

Approach

- Developed a phoneme approximation algorithm that maps phonemes across languages using universal phonological features, regardless of the languages' phoneme inventories or orthographic transparency

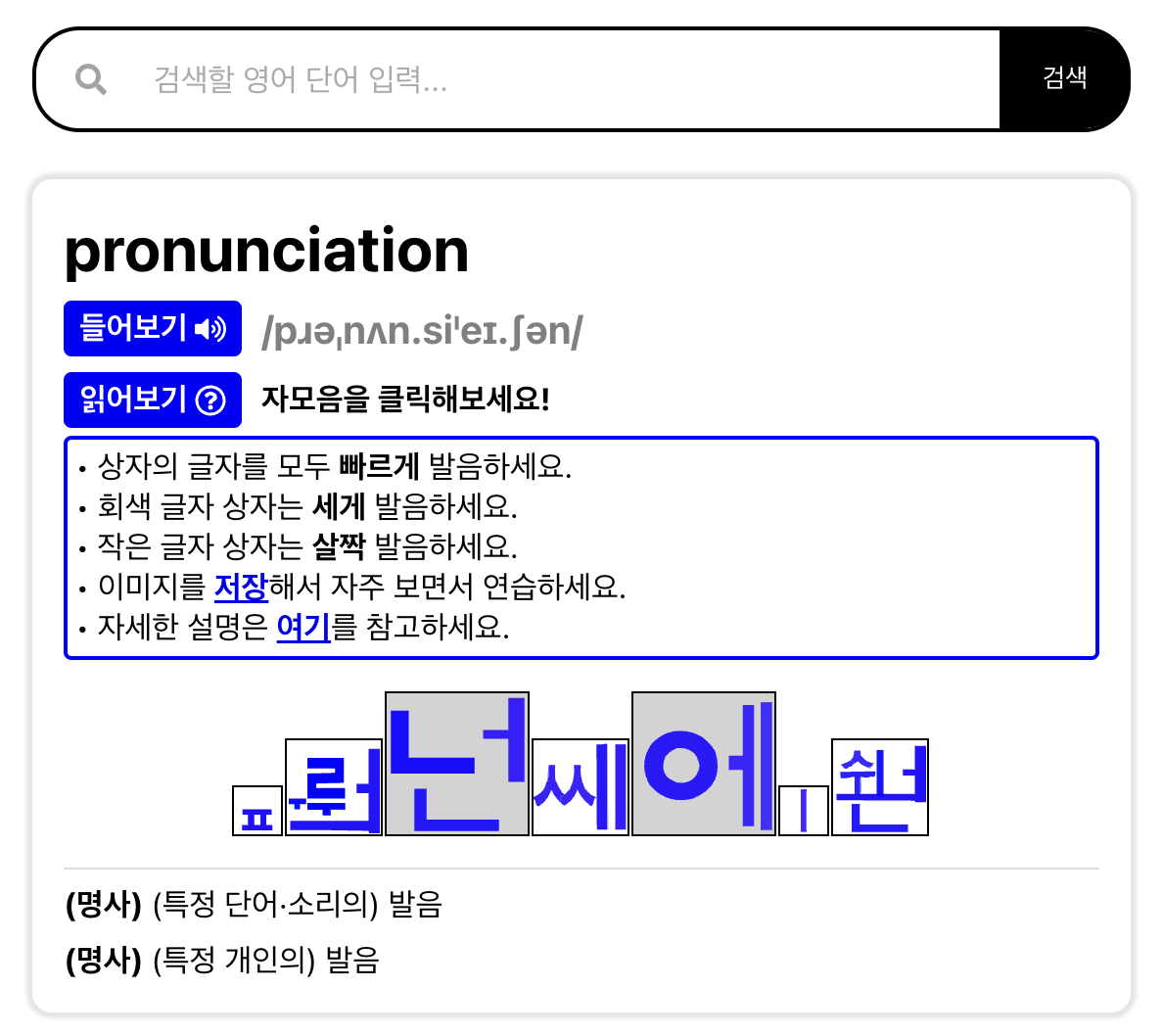

- Created an IPC-based Korean grapheme system that visually encodes English pronunciations using the Korean writing system, Hangul
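How IPC composites are written is not specified here, and the IPC grapheme system may use combinations beyond ordinary syllable blocks. One step any Hangul-based rendering needs, however, is assembling jamo into precomposed syllables. The sketch below uses the standard Unicode Hangul syllable composition arithmetic, `0xAC00 + (L * 21 + V) * 28 + T`; the `compose` helper is hypothetical, and only modern compatibility jamo are handled.

```python
# Modern Hangul jamo in Unicode index order (compatibility jamo characters).
CHOSEONG = "ㄱㄲㄴㄷㄸㄹㅁㅂㅃㅅㅆㅇㅈㅉㅊㅋㅌㅍㅎ"  # 19 initial consonants
JUNGSEONG = "ㅏㅐㅑㅒㅓㅔㅕㅖㅗㅘㅙㅚㅛㅜㅝㅞㅟㅠㅡㅢㅣ"  # 21 vowels
JONGSEONG = [""] + list("ㄱㄲㄳㄴㄵㄶㄷㄹㄺㄻㄼㄽㄾㄿㅀㅁㅂㅄㅅㅆㅇㅈㅊㅋㅌㅍㅎ")  # 28 incl. none

assert (len(CHOSEONG), len(JUNGSEONG), len(JONGSEONG)) == (19, 21, 28)

S_BASE = 0xAC00  # code point of 가, the first precomposed syllable


def compose(initial: str, vowel: str, final: str = "") -> str:
    """Combine one initial, one vowel, and an optional final into a syllable block."""
    if len(initial) != 1 or len(vowel) != 1:
        raise ValueError("initial and vowel must be single jamo")
    l_index = CHOSEONG.index(initial)  # raises ValueError if not an initial consonant
    v_index = JUNGSEONG.index(vowel)   # raises ValueError if not a vowel
    t_index = JONGSEONG.index(final)   # raises ValueError if not a valid final
    return chr(S_BASE + (l_index * 21 + v_index) * 28 + t_index)


print(compose("ㅍ", "ㅏ"))                                   # 파
print(compose("ㅎ", "ㅏ", "ㄴ") + compose("ㄱ", "ㅡ", "ㄹ"))  # 한글
```

A transcription tool built along these lines would call `compose` once per syllable of whatever Hangul spelling the approximation step selects.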

Results

- Improved the recognition rate of target L2 phonemes by 26% after ten minutes of pronunciation training, compared with pronunciations shaped by the original phonological transfer patterns

- Validated pronunciation improvements for all 14 English phonemes approximated using the IPC algorithm

- Deployed a publicly accessible web service for pronunciation training